The billion-dollar startup with a different idea for AI

A billion dollars in startup funding for a company that employs 12 people is a sign that investors still have faith in AI. But the founder of the startup in question, AMI Labs’ Yann LeCun, believes that the breed of technology we currently call AI (large language models) is not the route by which AI will deliver meaningful, long-term results.

Yann LeCun left his post as chief AI scientist at Meta late last year and founded Advanced Machine Intelligence Labs (AMI Labs), which he asserts will remain a research organisation and is not expected to produce a saleable product for perhaps five years. Rather than huge, general-purpose language-based models, the team at AMI Labs is concentrating on AIs made up of collections of modular components, each trained for and operating in a specific use-case.

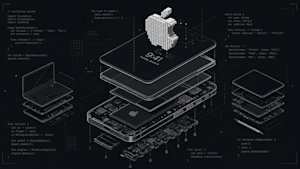

LeCun’s proposed system of artificial intelligence would comprise the following types of element:

- a world model specific to the domain in which the AI would operate, which might be industry-specific or, perhaps more likely, role-specific;
- an actor that proposes the next steps to take, based on classical reinforcement learning;
- a critic that analyses the different options drawn from the world model and from short-term memory, and assesses the proposed steps according to hard-coded rules;
- a perception system specific to the AI’s use (video or audio data, text, images, and so on), using, for example, deep-learning vision-recognition algorithms;
- a short-term memory;
- a configurator that orchestrates the movement of information between each of the above.
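To make the division of labour more concrete, here is a minimal Python sketch of how such modules might pass information to one another for a single decision. It is not AMI Labs’ design: the toy domain (keeping a room near a target temperature), every class and method name, and the critic’s rules are hypothetical, and the actor simply enumerates three fixed actions rather than using a learned reinforcement-learning policy.

```python
from __future__ import annotations

from collections import deque
from typing import Optional

# Hypothetical sketch: a toy thermostat domain. All names and rules are
# illustrative assumptions, not AMI Labs' actual architecture.


class Perception:
    """Turns a raw sensor reading into internal state (degrees Celsius)."""

    def perceive(self, raw_reading: str) -> float:
        return float(raw_reading)


class WorldModel:
    """Domain-specific model: predicts the next state for a proposed action."""

    def predict(self, temperature: float, action: float) -> float:
        # Assumed toy dynamics: the action changes the temperature directly.
        return temperature + action


class Actor:
    """Proposes candidate next steps.

    A fixed set of cool / hold / heat actions here; the actor described in
    the article would instead be driven by reinforcement learning.
    """

    def propose(self, temperature: float) -> list[float]:
        return [-1.0, 0.0, 1.0]


class ShortTermMemory:
    """Holds only the most recent few actions; older ones are dropped."""

    def __init__(self, size: int = 3) -> None:
        self.items = deque(maxlen=size)

    def remember(self, action: float) -> None:
        self.items.append(action)

    def last(self) -> Optional[float]:
        return self.items[-1] if self.items else None


class Critic:
    """Scores predicted outcomes against hard-coded rules."""

    def __init__(self, target: float, low: float, high: float,
                 reversal_penalty: float = 0.5) -> None:
        self.target, self.low, self.high = target, low, high
        self.reversal_penalty = reversal_penalty

    def score(self, predicted: float, action: float,
              last_action: Optional[float]) -> float:
        # Hard rule: never accept a predicted state outside the safe band.
        if not (self.low <= predicted <= self.high):
            return float("-inf")
        score = -abs(predicted - self.target)
        # Rule using short-term memory: discourage reversing the last action.
        if last_action is not None and action * last_action < 0:
            score -= self.reversal_penalty
        return score


class Configurator:
    """Routes information between the modules for each decision step."""

    def __init__(self, perception: Perception, world_model: WorldModel,
                 actor: Actor, critic: Critic, memory: ShortTermMemory) -> None:
        self.perception = perception
        self.world_model = world_model
        self.actor = actor
        self.critic = critic
        self.memory = memory

    def step(self, raw_reading: str) -> float:
        state = self.perception.perceive(raw_reading)
        last = self.memory.last()
        # Ask the world model where each proposal leads; let the critic judge.
        best = max(
            self.actor.propose(state),
            key=lambda a: self.critic.score(
                self.world_model.predict(state, a), a, last),
        )
        self.memory.remember(best)
        return best
```

With a target of 21°C and a safe band of 18 to 24°C, a reading of "19.0" leads the configurator to choose `1.0` (heat), while a reading of "24.0" yields `-1.0`, because heating would break the critic’s hard rule. Giving each module its own small, swappable interface is what would let a deployment re-weight one part, such as the critic’s hypothetical `reversal_penalty`, without retraining the rest.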

Unlike large language models, which are trained largely on one broad source of information (text scraped from the internet), each instance of LeCun’s AI would be given directed data relevant only to its environment and purpose. The weighting of each module could also differ from version to version: the critic module might be more comprehensive in areas that handle sensitive information, for example, while the perception module would be paramount in systems that need to react quickly to real-world events.

Each module would be trained in ways relevant to the AI’s particular field. There have been several successful instances of this approach in the past, such as machine-learning systems that teach themselves to play a video game or board game. These contrast with the large language models that underpin the vast majority of what we currently mean when we talk about AI.

LLMs are trained as generalists, producing best-guess answers based on what they have ingested. Those answers are then refined either by prompt engineering in software wrappers (Claude Code being the best-known recent example) or, at a deeper level, by reasoning models, in which the model’s ‘thinking out loud’ output is fed back into its own prompt before the user sees the final answer.

The financial implications of AIs built with the kind of methods AMI Labs proposes could be significant for the current AI industry, assuming Yann LeCun’s ideas produce fruitful and viable results. Large language models from big technology providers (Anthropic, Meta, OpenAI, Google et al.) have consumed more resources with each iteration over the last five years. On top of the growth in model size in the early stages, the repeated prompting that later versions rely on to improve their outputs makes training and running large models ever more expensive, to the point where only huge enterprises can afford to run them, and even then at a financial loss.

The smaller, focused modules inside AMI Labs’ proposed solution could run on a fraction of the GPU power that giant LLMs currently need, or even on-device. Whereas the models behind ChatGPT, for example, use hundreds of billions of parameters, specialist models that don’t need to be generalists should need only a few hundred million. That, together with the assumption that the cost of computing will continue to fall, means that local, cheap, and potentially more accurate AI may be only a short step away.
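The gap in parameter counts translates directly into memory. A minimal sketch, assuming 16-bit (2-byte) weights and illustrative sizes of 300 million and 300 billion parameters; these are hypothetical stand-ins for "a few hundred million" and "hundreds of billions", not published figures for any particular model. It counts weights only, ignoring the extra memory needed for activations and caches at run time.

```python
def weight_memory_gb(num_params: float, bytes_per_param: float = 2.0) -> float:
    """Memory needed just to store a model's weights, in gigabytes (10**9 bytes).

    Assumes every parameter takes `bytes_per_param` bytes (2.0 = 16-bit weights).
    """
    return num_params * bytes_per_param / 1e9


# Hypothetical, illustrative sizes; not figures for any real model.
specialist_gb = weight_memory_gb(300e6)  # "a few hundred million" parameters
generalist_gb = weight_memory_gb(300e9)  # "hundreds of billions" of parameters

print(f"specialist: {specialist_gb:.1f} GB, generalist: {generalist_gb:.0f} GB")
print(f"ratio: {generalist_gb / specialist_gb:.0f}x")
```

Under these assumptions the specialist model’s weights take about 0.6 GB, small enough for a modern phone or laptop, while the generalist needs about 600 GB, a thousand times more and well beyond any single consumer device. Quantising weights to 8 or 4 bits would shrink both figures but leave the ratio unchanged.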

A startup with a new idea attracting enormous financial backing is nothing new in technology’s recent history. But at least part of LeCun’s strategy rests on his belief that current large language models cannot improve enough to realise the ambitious claims made by their creators. AMI Labs seems to be offering investors a route by which AI could succeed at some point in the near future, at a manageable cost, using a different architecture from the current norm. It’s a different proposition from what today’s AI behemoths are offering, but the message of future potential is similar.

(Image source: “Perspective on Modular Construction” by sidehike is licensed under CC BY-NC-SA 2.0.)


AI News is powered by TechForge Media.