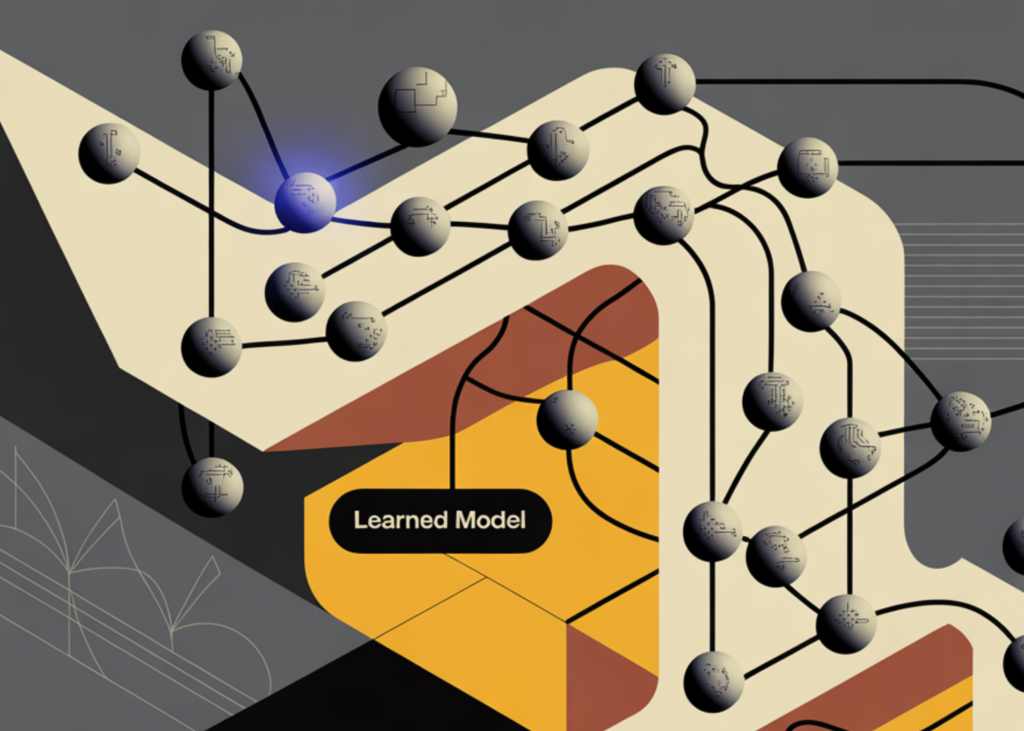

Meta AI and KAUST Researchers Propose Neural Computers That Fold Computation, Memory, and I/O Into One Learned Model

Researchers from Meta AI and the King Abdullah University of Science and Technology (KAUST) have introduced Neural Computers (NCs) — a proposed machine form in which a neural network itself acts as the running computer, rather than as a layer sitting on top of one. The research team presents both a theoretical framework and two working video-based prototypes that demonstrate early runtime primitives in command-line interface (CLI) and graphical user interface (GUI) settings.

What Makes This Different From Agents and World Models

To understand the proposed research, it helps to place it against existing system types. A conventional computer executes explicit programs. An AI agent takes tasks and uses an existing software stack (operating system, APIs, terminals) to accomplish them. A world model learns to predict how an environment evolves over time. Neural Computers fit none of these roles exactly. The researchers also explicitly distinguish NCs from the Neural Turing Machine and Differentiable Neural Computer line of work, which focused on differentiable external memory. The NC question is different: can a learning machine begin to assume the role of the running computer itself?

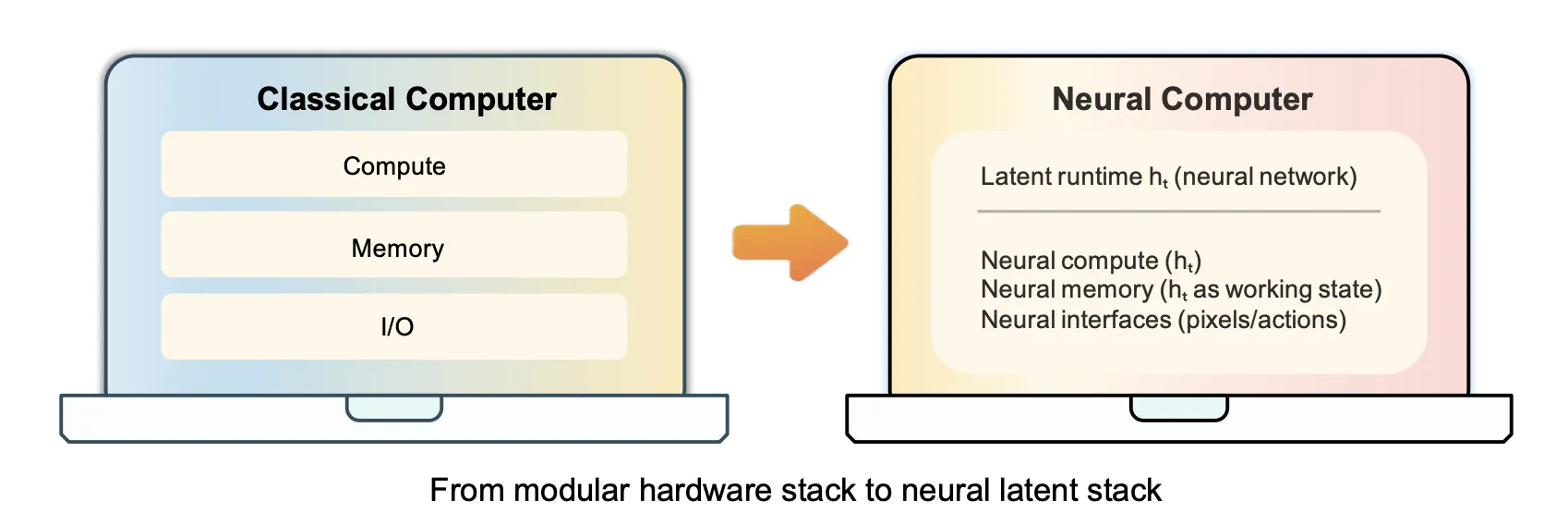

Formally, a Neural Computer (NC) is defined by an update function $F_\theta$ and a decoder $G_\theta$ operating over a latent runtime state $h_t$. At each step, the NC updates $h_t$ from the current observation $x_t$ and user action $u_t$, then samples the next frame $x_{t+1}$. The latent state carries what the operating system stack ordinarily would (executable context, working memory, and interface state), but inside the model rather than outside it.
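One natural reading of this loop is $h_t = F_\theta(h_{t-1}, x_t, u_t)$ followed by $x_{t+1} \sim G_\theta(\cdot \mid h_t)$. The Python sketch below is a toy under that assumption: `ToyNeuralComputer`, its two-number "latent state", and the hand-written update and decode rules are hypothetical stand-ins for the learned networks. It only shows how the latent state, rather than an external OS, carries context from one step to the next.

```python
import random
from dataclasses import dataclass, field
from typing import List


@dataclass
class ToyNeuralComputer:
    """Hypothetical stand-in for an NC: F_theta and G_theta are fixed toy rules, not learned networks."""
    seed: int = 0
    h: List[float] = field(default_factory=lambda: [0.0, 0.0])  # latent runtime state h_t

    def __post_init__(self) -> None:
        self.rng = random.Random(self.seed)

    def update(self, x_t: float, u_t: float) -> None:
        # F_theta (toy): fold the current observation and user action into the latent state.
        # h[0] is a decaying summary of observations; h[1] accumulates actions.
        self.h = [0.9 * self.h[0] + 0.1 * x_t, self.h[1] + u_t]

    def decode(self) -> float:
        # G_theta (toy): sample the next "frame" (a scalar here) from the latent state.
        return self.h[0] + self.h[1] + self.rng.gauss(0.0, 0.01)

    def step(self, x_t: float, u_t: float) -> float:
        self.update(x_t, u_t)
        return self.decode()


def run(nc: ToyNeuralComputer, first_frame: float, actions: List[float]) -> List[float]:
    """Roll the NC forward; each sampled frame becomes the next observation (an assumption)."""
    frames = [first_frame]
    for u in actions:
        frames.append(nc.step(frames[-1], u))
    return frames


if __name__ == "__main__":
    frames = run(ToyNeuralComputer(seed=42), first_frame=0.0, actions=[1.0, 0.0, -1.0])
    print([round(f, 3) for f in frames])
```

Nothing outside the object stores program state here: everything the next frame depends on lives in `h`, which is the property the NC framing emphasizes.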

The long-term target is a Completely Neural Computer (CNC): a mature, general-purpose realization satisfying four conditions simultaneously — Turing complete, universally programmable, behavior-consistent unless explicitly reprogrammed, and exhibiting machine-native architectural and programming-language semantics. A key operational requirement tied to behavior consistency is a run/update contract: ordinary inputs must execute installed capability without silently modifying it, while behavior-changing updates must occur explicitly through a programming interface, with traces that can be inspected and rolled back.
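The run/update contract can be made concrete with a small sketch. The class below is hypothetical (the article does not describe an API): `run` executes an installed capability without touching it, `update` is the only path that changes behavior, and every update is appended to an inspectable trace that `rollback` can undo. In an actual NC the "capability" would live in model weights or latent state, not in a Python dict.

```python
from typing import Any, Callable, Dict, List, Tuple

Routine = Callable[[Any], Any]


class GovernedRuntime:
    """Hypothetical illustration of a run/update contract with an inspectable, reversible trace."""

    def __init__(self, program: Dict[str, Routine]) -> None:
        self._program = dict(program)  # installed capabilities, keyed by name
        # Each trace entry: (routine name, stated reason, snapshot of the program before the update).
        self.trace: List[Tuple[str, str, Dict[str, Routine]]] = []

    def run(self, name: str, arg: Any) -> Any:
        # Ordinary input: execute an installed routine; the program itself is never modified here.
        return self._program[name](arg)

    def update(self, name: str, fn: Routine, reason: str) -> None:
        # Explicit reprogramming: snapshot the current program so the change can be rolled back.
        self.trace.append((name, reason, dict(self._program)))
        self._program[name] = fn

    def rollback(self) -> None:
        # Restore the program as it was before the most recent update.
        _, _, previous = self.trace.pop()
        self._program = previous
```

For example, after `rt = GovernedRuntime({"double": lambda x: 2 * x})`, any number of `rt.run("double", ...)` calls leave `rt.trace` empty; only `rt.update(...)` adds an entry, and `rt.rollback()` restores the earlier behavior.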

Two Prototypes Built on Wan2.1

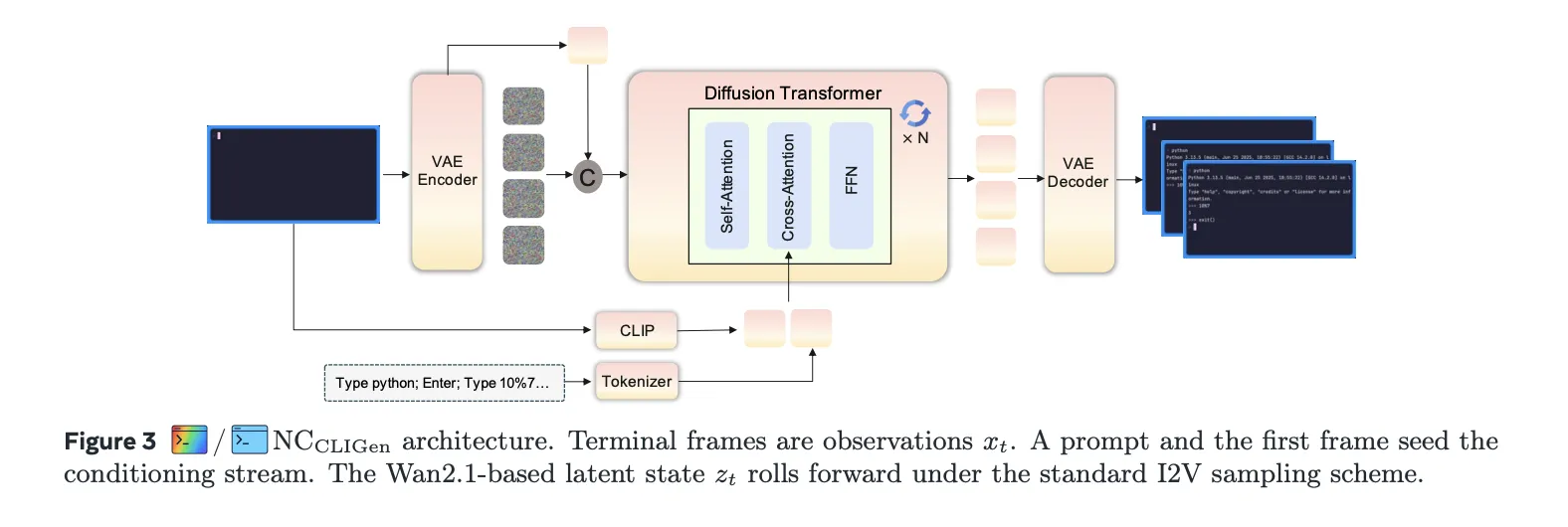

Both prototypes, NCCLIGen and NCGUIWorld, were built on Wan2.1, which was the state-of-the-art video generation model at the time of the experiments, with NC-specific conditioning and action modules added on top. The two models were trained separately and share no parameters. Both are evaluated in open-loop mode: rollouts are driven by recorded prompts and logged action streams rather than by interaction with a live environment.
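The defining feature of open-loop evaluation is that the action stream is fixed in advance, so it cannot react to what the model renders. The sketch below illustrates that protocol under stated assumptions: `step_fn` is a hypothetical stand-in for one model step, frames are plain lists of floats, the model's own output is assumed to be fed back as the next observation, and per-step mean squared error is only a placeholder score (the article reports PSNR, SSIM, LPIPS, and FVD).

```python
from typing import Callable, List, Sequence, Tuple

Frame = List[float]
State = List[float]
StepFn = Callable[[State, Frame, float], Tuple[State, Frame]]  # hypothetical model-step signature


def rollout_open_loop(
    step_fn: StepFn, h0: State, first_frame: Frame, logged_actions: Sequence[float]
) -> List[Frame]:
    """Drive the model with a pre-recorded action log; actions never depend on generated frames."""
    h, frame = h0, first_frame
    generated = [first_frame]
    for u in logged_actions:
        h, frame = step_fn(h, frame, u)  # assumed: generated frame is fed back as the next input
        generated.append(frame)
    return generated


def per_step_mse(generated: Sequence[Frame], recorded: Sequence[Frame]) -> List[float]:
    """Placeholder score: mean squared error between generated and recorded frames at each step."""
    return [
        sum((g - r) ** 2 for g, r in zip(gen_frame, rec_frame)) / len(gen_frame)
        for gen_frame, rec_frame in zip(generated, recorded)
    ]
```

In a closed-loop (live) setting, the next action would instead come from a user or agent looking at the generated frame, which is exactly the feedback path this setup leaves out.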

NCCLIGen models terminal interaction from a text prompt and an initial screen frame, treating CLI generation as text-and-image-to-video. A CLIP image encoder processes the first frame, a T5 text encoder embeds the caption, and these conditioning features are concatenated with diffusion noise and processed by a DiT (Diffusion Transformer) stack. Two datasets were assembled. CLIGen (General) contains approximately 823,989 video streams (roughly 1,100 hours) sourced from public asciinema recordings (.cast files). CLIGen (Clean) is split into approximately 78,000 regular traces and approximately 50,000 Python math validation traces, generated with the vhs toolkit inside Dockerized environments. Training NCCLIGen on CLIGen (General) required approximately 15,000 H100 GPU hours; training on CLIGen (Clean) required approximately 7,000 H100 GPU hours.
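A quick back-of-the-envelope check puts the CLIGen (General) figures in perspective. Assuming the roughly 1,100 hours and the 823,989 streams describe the same collection, the average stream lasts only a few seconds:

$$
\bar{d} \approx \frac{1{,}100\ \text{h} \times 3{,}600\ \text{s/h}}{823{,}989} = \frac{3{,}960{,}000\ \text{s}}{823{,}989} \approx 4.8\ \text{s}
$$

By the same arithmetic, CLIGen (Clean) totals roughly $78{,}000 + 50{,}000 \approx 128{,}000$ traces.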

Reconstruction quality on CLIGen (General) reached an average PSNR of 40.77 dB and SSIM of 0.989 at a 13px font size. Character-level accuracy, measured with Tesseract OCR, rose from 0.03 at initialization to 0.54 at 60,000 training steps, and exact-line match accuracy reached 0.31. Caption specificity had a large effect: detailed captions (averaging 76 words) raised PSNR from 21.90 dB under semantic descriptions to 26.89 dB, a gain of nearly 5 dB. This is because terminal frames are governed primarily by text placement, so literal captions act as scaffolding for precise text-to-pixel alignment. Training dynamics also showed an early plateau: on CLIGen (Clean), PSNR and SSIM level off around 25,000 steps, and training up to 460,000 steps yields no meaningful further gain.
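For readers less familiar with these metrics, the sketch below implements the standard PSNR formula, $\mathrm{PSNR} = 10 \log_{10}(\mathrm{MAX}^2 / \mathrm{MSE})$, plus one common way to score OCR output. The article does not say exactly how character-level accuracy was computed, so the definition here (1 minus normalized Levenshtein distance) is an assumption, as are all function names; the OCR step itself is assumed to have already produced text. The last helper shows why a ~5 dB gain is large: it corresponds to roughly a threefold drop in mean squared error.

```python
import math
from typing import List, Sequence


def psnr(pred: Sequence[float], target: Sequence[float], max_val: float = 255.0) -> float:
    """Peak signal-to-noise ratio in dB between two equally sized pixel sequences."""
    mse = sum((p - t) ** 2 for p, t in zip(pred, target)) / len(target)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_val ** 2 / mse)


def levenshtein(a: str, b: str) -> int:
    """Edit distance (insertions, deletions, substitutions) using a single rolling DP row."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        cur = [i]
        for j, cb in enumerate(b, start=1):
            cur.append(min(prev[j] + 1, cur[j - 1] + 1, prev[j - 1] + (ca != cb)))
        prev = cur
    return prev[-1]


def char_accuracy(ocr: str, reference: str) -> float:
    """Assumed definition: 1 - normalized edit distance, clipped at 0."""
    if not reference:
        return 1.0 if not ocr else 0.0
    return max(0.0, 1.0 - levenshtein(ocr, reference) / len(reference))


def exact_line_match(ocr_lines: List[str], ref_lines: List[str]) -> float:
    """Fraction of reference lines reproduced exactly (trailing whitespace ignored)."""
    if not ref_lines:
        return 1.0
    hits = sum(o.rstrip() == r.rstrip() for o, r in zip(ocr_lines, ref_lines))
    return hits / len(ref_lines)


def mse_ratio_for_db_gain(gain_db: float) -> float:
    """A PSNR gain of g dB means the MSE shrank by a factor of 10 ** (g / 10)."""
    return 10 ** (gain_db / 10)
```

Plugging in the caption result, `mse_ratio_for_db_gain(26.89 - 21.90)` is about 3.15, so detailed captions cut the pixel error to roughly a third.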

On symbolic computation, arithmetic probe accuracy on a held-out pool of 1,000 math problems came in at 4% for NCCLIGen and 0% for base Wan2.1 — compared to 71% for Sora-2 and 2% for Veo3.1. Re-prompting alone, by providing the correct answer explicitly in the prompt at inference time, raised NCCLIGen accuracy from 4% to 83% without modifying the backbone or adding reinforcement learning. The research team interpreted this as evidence of steerability and faithful rendering of conditioned content, not native arithmetic computation inside the model.
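The sketch below contrasts a plain probe prompt with a re-prompted one. The caption wording, the `build_prompt` helper, and the exact-match scoring rule are hypothetical, not the paper's templates. The point is that once the correct answer appears in the conditioning text, a correct frame only requires faithful rendering, which is why the 83% figure is read as steerability rather than arithmetic ability.

```python
from typing import List, Optional, Tuple


def build_prompt(expression: str, answer: Optional[int] = None) -> str:
    """Hypothetical caption for a terminal clip that evaluates an arithmetic expression in Python."""
    prompt = f"A terminal running python3 -c 'print({expression})' and showing the result."
    if answer is not None:
        # Re-prompting: the correct result is stated explicitly, so the model only has to render it.
        prompt += f" The printed output is {answer}."
    return prompt


def probe_accuracy(ocr_answers: List[str], problems: List[Tuple[str, int]]) -> float:
    """Fraction of problems whose OCR-read output matches the true result exactly."""
    if not problems:
        return 0.0
    correct = sum(o.strip() == str(ans) for o, (_, ans) in zip(ocr_answers, problems))
    return correct / len(problems)
```

On a 1,000-problem pool, 4% and 83% correspond to 40 and 830 correctly rendered answers.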

NCGUIWorld addresses full desktop interaction, modeling each interaction as a synchronized sequence of RGB frames and input events collected at 1024×768 resolution on Ubuntu 22.04 with XFCE4 at 15 FPS. The dataset totals roughly 1,510 hours: Random Slow (~1,000 hours), Random Fast (~400 hours), and 110 hours of goal-directed trajectories collected using Claude CUA. Training used 64 GPUs for approximately 15 days per run, totaling roughly 23,000 GPU hours per full pass.
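Synchronizing input events with frames is largely timestamp bookkeeping. The sketch below assumes a simple, hypothetical representation (events as timestamped records, frames sampled at a fixed 15 FPS) and buckets each event into the frame interval in which it occurred; the `InputEvent` type and its fields are illustrative, not the dataset's actual schema. As a sanity check on the compute figure, 64 GPUs × 15 days × 24 h comes to 23,040 GPU hours, consistent with the roughly 23,000 reported.

```python
from dataclasses import dataclass
from typing import Dict, List

FPS = 15  # capture rate reported for NCGUIWorld


@dataclass
class InputEvent:
    t: float      # seconds since the start of the recording (hypothetical field)
    kind: str     # e.g. "mouse_move", "click", "key" (hypothetical labels)
    payload: str  # event details, e.g. button or key name


def frame_index(t: float, fps: int = FPS) -> int:
    """Index k of the frame interval [k/fps, (k+1)/fps) that contains time t."""
    return int(t * fps)


def align_events(events: List[InputEvent], n_frames: int, fps: int = FPS) -> Dict[int, List[InputEvent]]:
    """Bucket events by the frame interval in which they occur, in chronological order."""
    per_frame: Dict[int, List[InputEvent]] = {k: [] for k in range(n_frames)}
    for ev in sorted(events, key=lambda e: e.t):
        k = frame_index(ev.t, fps)
        if 0 <= k < n_frames:  # drop events outside the captured clip
            per_frame[k].append(ev)
    return per_frame
```

Pairing frame k with the events in its interval gives the (frame, action) sequence that an action-conditioned video model can consume.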

The research team evaluated four action injection schemes — external, contextual, residual, and internal — differing in how deeply action embeddings interact with the diffusion backbone. Internal conditioning, which inserts action cross-attention directly inside each transformer block, achieved the best structural consistency (SSIM+15 of 0.863, FVD+15 of 14.5). Residual conditioning achieved the best perceptual distance (LPIPS+15 of 0.138). On cursor control, SVG mask/reference conditioning raised cursor accuracy to 98.7%, against 8.7% for coordinate-only supervision — demonstrating that treating the cursor as an explicit visual object to supervise is essential. Data quality proved as consequential as architecture: the 110-hour Claude CUA dataset outperformed roughly 1,400 hours of random exploration across all metrics (FVD: 14.72 vs. 20.37 and 48.17), confirming that curated, goal-directed data is substantially more sample-efficient than passive collection.
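Of the four schemes, internal conditioning is the easiest to picture in code. The toy sketch below shows a single cross-attention step in which video tokens (queries) attend to action embeddings (keys and values), with the result added back through a residual connection, as would happen inside each transformer block. It is a simplified illustration, not the paper's module: the learned query/key/value/output projections, multiple heads, and normalization layers are all omitted, and the function names are hypothetical.

```python
import math
from typing import List

Vec = List[float]


def softmax(xs: Vec) -> Vec:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def action_cross_attention(video_tokens: List[Vec], action_tokens: List[Vec]) -> List[Vec]:
    """Each video token attends over the action embeddings; the result is added residually.

    Simplification: identity projections, a single head, no normalization.
    """
    d = len(video_tokens[0])
    scale = 1.0 / math.sqrt(d)
    out = []
    for q in video_tokens:
        # Scaled dot-product scores between this video token and every action token.
        scores = [scale * sum(qi * ki for qi, ki in zip(q, k)) for k in action_tokens]
        weights = softmax(scores)
        # Weighted sum of action embeddings (values = keys here).
        attended = [sum(w * v[i] for w, v in zip(weights, action_tokens)) for i in range(d)]
        out.append([qi + ai for qi, ai in zip(q, attended)])  # residual connection
    return out
```

With a single action token the attention weights collapse to 1, so every video token simply receives that action embedding; with several tokens, each video token mixes them according to similarity.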

What Remains Unsolved

The research team is direct about the gap between current prototypes and the CNC definition. Stable reuse of learned routines, reliable symbolic computation, long-horizon execution consistency, and explicit runtime governance all remain open problems. The roadmap they outline centers on three acceptance lenses: install–reuse, execution consistency, and update governance. Progress on all three, the research team argues, is what would make Neural Computers look less like isolated demonstrations and more like a candidate machine form for next-generation computing.

Key Takeaways

Neural Computers propose making the model itself the running computer. Unlike AI agents that operate through existing software stacks, NCs aim to fold computation, memory, and I/O into a single learned runtime state — eliminating the separation between the model and the machine it runs on.

Early prototypes show measurable interface primitives. Built on Wan2.1, NCCLIGen reached 40.77 dB PSNR and 0.989 SSIM on terminal rendering, and NCGUIWorld achieved 98.7% cursor accuracy using SVG mask/reference conditioning — confirming that I/O alignment and short-horizon control are learnable from collected interface traces.

Data quality matters more than data scale. In GUI experiments, 110 hours of goal-directed trajectories from Claude CUA outperformed roughly 1,400 hours of random exploration across all metrics, establishing that curated interaction data is substantially more sample-efficient than passive collection.

Current models are strong renderers but not native reasoners. NCCLIGen scored only 4% on arithmetic probes unaided, but re-prompting pushed accuracy to 83% without modifying the backbone: evidence of steerability, not internal computation. Symbolic reasoning remains a primary open challenge.

Three practical gaps must close before a Completely Neural Computer is achievable. The research team frames near-term progress around install–reuse (learned capabilities persisting and remaining callable), execution consistency (reproducible behavior across runs), and update governance (behavioral changes traceable to explicit reprogramming rather than silent drift).


Michal Sutter is a data science professional with a Master of Science in Data Science from the University of Padova. With a solid foundation in statistical analysis, machine learning, and data engineering, Michal excels at transforming complex datasets into actionable insights.