The ‘Bayesian’ Upgrade: Why Google AI’s New Teaching Method is the Key to LLM Reasoning

Large Language Models (LLMs) are the world’s best mimics, but when it comes to the cold, hard logic of updating beliefs based on new evidence, they are surprisingly stubborn. A team of researchers from Google argues that the current crop of AI agents falls far short of ‘probabilistic reasoning’: the ability to maintain and update a ‘world model’ as new information trickles in.

The solution? Stop trying to give them the right answers and start teaching them how to guess like a mathematician.

The Problem: The ‘One-and-Done’ Plateau

While LLMs like Gemini-1.5 Pro and GPT-4.1 Mini can write code or summarize emails, they struggle as interactive agents. Imagine a flight booking assistant: it needs to infer your preferences (price vs. duration) by watching which flights you pick over several rounds.

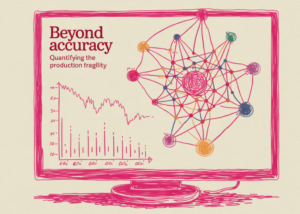

The research team found that off-the-shelf LLMs—including heavyweights like Llama-3-70B and Qwen-2.5-32B—showed ‘little or no improvement’ after the first round of interaction. While a ‘Bayesian Assistant’ (a symbolic model using Bayes’ rule) gets more accurate with every data point, standard LLMs plateaued almost immediately, failing to adapt their internal ‘beliefs’ to the user’s specific reward function.

Meet Bayesian Teaching

The research team introduced a technique called Bayesian Teaching. Instead of fine-tuning a model on ‘correct’ data (what they call an Oracle Teacher), they fine-tuned it to mimic a Bayesian Assistant—a model that explicitly uses Bayes’ rule to update a probability distribution over possible user preferences.

Here is the technical breakdown:

The Task: A five-round flight recommendation interaction. Flights are defined by features like price, duration, and stops.

The Reward Function: A vector representing user preferences (e.g., a strong preference for low prices).

The Posterior Update: After each round, the Bayesian Assistant updates its posterior distribution based on the prior (initial assumptions) and the likelihood (the probability the user would pick a certain flight given a specific reward function).
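
To make the posterior update concrete, here is a minimal Python sketch of a Bayesian Assistant over a small, discrete set of candidate reward functions. It is an illustration under stated assumptions, not the paper's implementation: the three weight levels, the softmax choice model with its `temperature`, the feature scaling to [0, 1], and the expected-utility recommendation rule are all hypothetical choices made for this example.

```python
import itertools
import math

# Hypothetical setup: each flight is a tuple of feature values scaled to [0, 1],
# and each candidate reward function is a weight vector over those features.
FEATURES = ("price", "duration", "stops")
WEIGHT_LEVELS = (-1.0, 0.0, 1.0)  # assumed levels: dislikes, indifferent, likes


def hypotheses():
    """Enumerate every candidate reward vector (3**3 = 27 in this sketch)."""
    return list(itertools.product(WEIGHT_LEVELS, repeat=len(FEATURES)))


def utility(weights, flight):
    """Linear reward: weighted sum of the flight's features."""
    return sum(w * x for w, x in zip(weights, flight))


def choice_likelihood(weights, options, chosen, temperature=0.1):
    """P(user picks `chosen` | weights), assuming a softmax choice rule."""
    scores = [utility(weights, f) / temperature for f in options]
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    return exps[chosen] / sum(exps)


def update_posterior(prior, options, chosen):
    """One application of Bayes' rule: posterior is proportional to prior * likelihood."""
    unnorm = {w: p * choice_likelihood(w, options, chosen) for w, p in prior.items()}
    z = sum(unnorm.values())
    return {w: p / z for w, p in unnorm.items()}


def recommend(posterior, options):
    """Index of the option with the highest expected utility under the posterior."""
    expected = [sum(p * utility(w, f) for w, p in posterior.items()) for f in options]
    return max(range(len(options)), key=expected.__getitem__)
```

Starting from a uniform prior such as `{w: 1 / 27 for w in hypotheses()}`, calling `update_posterior` once per round and then `recommend` on the next set of flights reproduces the loop described above: each observed choice shifts probability mass toward the reward functions that best explain it.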

By using Supervised Fine-Tuning (SFT) on these Bayesian interactions, the research team forced the LLMs to adopt the process of reasoning under uncertainty, not just the final result.

Why ‘Educated Guesses’ Beat Correct Answers

The most counter-intuitive finding of the research is that Bayesian Teaching consistently outperformed Oracle Teaching.

In ‘Oracle Teaching,’ the model is trained on a teacher that already knows exactly what the user wants. In ‘Bayesian Teaching,’ the teacher is often wrong in early rounds because it is still learning. However, those ‘educated guesses’ provide a much stronger learning signal. By watching the Bayesian Assistant struggle with uncertainty and then update its beliefs after receiving feedback, the LLM learns the ‘skill’ of belief updating.
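
The difference between the two teachers shows up in how the fine-tuning targets are generated. The sketch below simulates one five-round episode and records, for each round, the recommendation the teacher would make. Everything here is an assumption made for illustration: the `simulate_episode` helper, the random flight features, the deterministic simulated user, the softmax likelihood, and the dictionary layout of each training example are hypothetical and are not taken from the paper.

```python
import itertools
import math
import random

LEVELS = (-1.0, 0.0, 1.0)  # assumed preference levels per feature
N_FEATURES = 3             # e.g. price, duration, stops, each scaled to [0, 1]


def utility(w, flight):
    return sum(wi * xi for wi, xi in zip(w, flight))


def likelihood(w, options, chosen, temperature=0.1):
    # Assumed softmax choice model used by the Bayesian teacher.
    scores = [utility(w, f) / temperature for f in options]
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    return exps[chosen] / sum(exps)


def best_option(score_fn, options):
    return max(range(len(options)), key=lambda i: score_fn(options[i]))


def simulate_episode(true_w, teacher, rounds=5, n_options=3, seed=0):
    """Return one training example per round: history so far, options, teacher's pick."""
    rng = random.Random(seed)
    hyps = list(itertools.product(LEVELS, repeat=N_FEATURES))
    posterior = {w: 1.0 / len(hyps) for w in hyps}
    history, examples = [], []
    for _ in range(rounds):
        options = [tuple(rng.random() for _ in range(N_FEATURES))
                   for _ in range(n_options)]
        if teacher == "oracle":
            # Oracle teacher: already knows the user's true reward function.
            target = best_option(lambda f: utility(true_w, f), options)
        else:
            # Bayesian teacher: acts on current beliefs, so it can be wrong early on.
            target = best_option(
                lambda f: sum(p * utility(w, f) for w, p in posterior.items()),
                options)
        examples.append({"history": list(history), "options": options,
                         "target": target})
        # The simulated user reveals their actual choice, which becomes feedback.
        user_choice = best_option(lambda f: utility(true_w, f), options)
        history.append((options, user_choice))
        unnorm = {w: p * likelihood(w, options, user_choice)
                  for w, p in posterior.items()}
        z = sum(unnorm.values())
        posterior = {w: p / z for w, p in unnorm.items()}
    return examples
```

With the same seed, `simulate_episode(w, "oracle")` and `simulate_episode(w, "bayesian")` see identical flights and differ only in the `target` field. The oracle targets never depend on the history, while the Bayesian targets change as feedback accumulates, which is the belief-updating behavior the fine-tuned model is meant to imitate.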

The results were stark: Bayesian-tuned models (like Gemma-2-9B or Llama-3-8B) were not only more accurate but also agreed with the ‘gold standard’ Bayesian strategy roughly 80% of the time, a significantly higher rate than their original versions achieved.
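
An agreement figure like this can be read as the fraction of rounds in which the LLM recommends the same option as the Bayesian Assistant. The helper below is a minimal sketch of that reading. The function name and the use of option indices are assumptions for illustration, and the paper may compute its metric differently.

```python
def agreement_rate(model_picks, bayesian_picks):
    """Fraction of rounds where the LLM's pick matches the Bayesian Assistant's."""
    if len(model_picks) != len(bayesian_picks):
        raise ValueError("both sequences must cover the same rounds")
    if not model_picks:
        raise ValueError("need at least one round")
    matches = sum(m == b for m, b in zip(model_picks, bayesian_picks))
    return matches / len(model_picks)
```

For example, if the two systems pick the same flight in four of five rounds, `agreement_rate` returns 0.8.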

Generalization: Beyond Flights to Web Shopping

For devs, the ‘holy grail’ is generalization. A model trained on flight data shouldn’t just be good at flights; it should understand the concept of learning from a user.

The research team tested their fine-tuned models on:

Increased Complexity: Moving from four flight features to eight.

New Domains: Hotel recommendations.

Real-World Scenarios: A web shopping task using real products (titles and descriptions) from a simulated environment.

Even though the models were only fine-tuned on synthetic flight data, they successfully transferred those probabilistic reasoning skills to hotel booking and web shopping. In fact, the Bayesian LLMs even outperformed human participants in some rounds, as humans often deviate from normative reasoning standards due to biases or inattention.

The Neuro-Symbolic Bridge

This research highlights a unique strength of deep learning: the ability to distill a classic, symbolic model (the Bayesian Assistant) into a neural network (the LLM).

While symbolic models are great for simple, codified tasks, they are notoriously difficult to build for ‘messy’ real-world domains like web shopping. By teaching the LLM to mimic the symbolic model’s strategy, it is possible to get the best of both worlds: the rigorous reasoning of a Bayesian and the flexible, natural-language understanding of a transformer.

Key Takeaways

LLMs Struggle with Belief Updating: Off-the-shelf LLMs, including state-of-the-art models like Gemini-1.5 Pro and GPT-4.1 Mini, fail to effectively update their beliefs as they receive new information, with performance often plateauing after a single interaction.

Bayesian Teaching Outperforms Direct Training: Teaching an LLM to mimic the ‘educated guesses’ and uncertainty of a normative Bayesian model is more effective than training it directly on correct answers (oracle teaching).

Probabilistic Skills Generalize Across Domains: LLMs fine-tuned on simple synthetic tasks (e.g., flight recommendations) can successfully transfer their belief-updating skills to more complex, real-world scenarios like web shopping and hotel recommendations.

Neural Models Are More Robust to Human Noise: While a purely symbolic Bayesian model is optimal for consistent simulated users, fine-tuned LLMs demonstrate greater robustness when interacting with humans, whose choices often deviate from their stated preferences due to noise or bias.

Effective Distillation of Symbolic Strategies: The research shows that LLMs can learn to approximate complex symbolic reasoning strategies through supervised fine-tuning, allowing them to apply these strategies in domains too messy or complex to be codified explicitly in a classic symbolic model.
