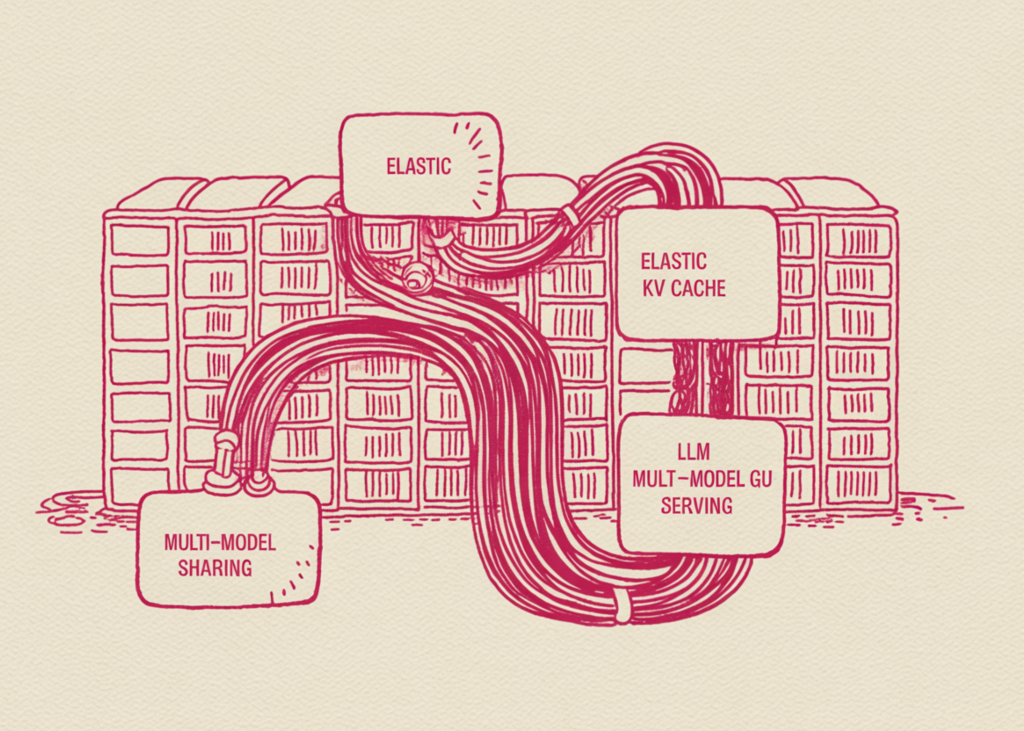

A Coding Implementation on kvcached for Elastic KV Cache Memory, Bursty LLM Serving, and Multi-Model GPU Sharing

import matplotlib.pyplot as plt
import numpy as np  # used by the summary below; harmless if already imported

# Left panel: VRAM over time. Right panel: per-request latency.
fig, axes = plt.subplots(1, 2, figsize=(14, 4.5))

# Each trace is a sequence of (time_s, used_mb) pairs; split into two series.
tk, mk = zip(*mem_kvc); tb, mb = zip(*mem_base)
axes[0].plot(tk, mk, label="with kvcached", linewidth=2, color="#1f77b4")
axes[0].plot(tb, mb, label="baseline (static)", linewidth=2,
             linestyle="--", color="#d62728")
# Dotted horizontal lines mark the idle VRAM of each configuration.
axes[0].axhline(idle_kvc, color="#1f77b4", alpha=.3, linestyle=":")
axes[0].axhline(idle_base, color="#d62728", alpha=.3, linestyle=":")
axes[0].set_xlabel("time (s)"); axes[0].set_ylabel("GPU memory used (MB)")
axes[0].set_title("VRAM under a bursty workload\n(dotted = idle-baseline VRAM)")
axes[0].grid(alpha=.3); axes[0].legend()

# Note: Matplotlib 3.9+ renames `labels` to `tick_labels` for boxplot.
axes[1].boxplot([lat_kvc, lat_base], labels=["kvcached", "baseline"])
axes[1].set_ylabel("request latency (s)")
axes[1].set_title(f"Latency across {len(lat_kvc)} requests")
axes[1].grid(alpha=.3)

plt.tight_layout()
plt.savefig("/content/kvcached_single_model.png", dpi=120, bbox_inches="tight")
plt.show()

print("\n--- Single-model summary --------------------------------------------")
print(f"  Idle VRAM    kvcached: {idle_kvc:>6.0f} MB   "
      f"baseline: {idle_base:>6.0f} MB   "
      f"(savings: {idle_base - idle_kvc:>5.0f} MB)")
print(f"  Peak VRAM    kvcached: {max(mk):>6.0f} MB   "
      f"baseline: {max(mb):>6.0f} MB")
print(f"  Median lat.  kvcached: {np.median(lat_kvc):>6.2f} s    "
      f"baseline: {np.median(lat_base):>6.2f} s")
print(f"  VRAM flex    kvcached: peak-idle = {max(mk)-min(mk):>5.0f} MB   "
      f"(baseline can't release; static pool)")

print("\n=== Experiment 3: Two LLMs sharing one GPU (kvcached on both) ===")
pA, lA = launch_vllm(MODEL_A, PORT_A, kvcached=True, log_path="/tmp/mA.log")
try:
    wait_ready(PORT_A)
    pB, lB = launch_vllm(MODEL_B, PORT_B, kvcached=True, log_path="/tmp/mB.log")
    try:
        wait_ready(PORT_B)
        print(f"  Both models loaded. Idle VRAM: {vram_used_mb():.0f} MB")

        sampler = MemorySampler(); sampler.start()
        # Alternate bursts between the two models, pausing between rounds.
        for i in range(4):
            port, model = ((PORT_A, MODEL_A) if i % 2 == 0
                           else (PORT_B, MODEL_B))
            print(f"  round {i+1}: driving {model}")
            bursty_workload(port, model, n_bursts=1, burst_size=4, pause=0)
            time.sleep(5)
        sampler.stop()

        t, m = zip(*sampler.samples)
        plt.figure(figsize=(11, 4.2))
        plt.plot(t, m, color="#c2410c", linewidth=2)
        plt.xlabel("time (s)"); plt.ylabel("GPU memory used (MB)")
        plt.title("Two LLMs on one T4 via kvcached: memory flexes per active model")
        plt.grid(alpha=.3); plt.tight_layout()
        plt.savefig("/content/kvcached_multillm.png", dpi=120,
                    bbox_inches="tight")
        plt.show()
    finally:
        # Always stop model B, even if the workload or plotting fails.
        shutdown(pB, lB)
finally:
    # Model A is shut down last, mirroring the launch order.
    shutdown(pA, lA)

print("\n=== Bonus: kvcached ships CLI tools ===")
print("  kvtop - live per-instance KV memory monitor (like nvtop for kvcached)")
print("  kvctl - set/limit per-instance memory budgets in shared memory")
for tool in ("kvtop", "kvctl"):
    path = shutil.which(tool)
    print(f"  {tool}: {path or 'not on PATH'}")

print("\nAll plots saved to /content/. Done.")
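
The single-model summary above reduces each memory trace to a handful of numbers: the lowest reading, the peak, and the "flex" between them. If you want to reuse that reduction for other traces (for example the two-model trace from Experiment 3), a small helper along these lines may be convenient. This is a minimal sketch, not part of kvcached or of the tutorial's own helpers: the function name `summarize_trace` and the dictionary keys are hypothetical, and it assumes samples are `(time_s, used_mb)` pairs like those in `mem_kvc` and `sampler.samples`.

```python
from statistics import median


def summarize_trace(samples):
    """Reduce (time_s, used_mb) samples to floor / peak / flex figures.

    Hypothetical helper: assumes the same (timestamp, MB) tuple shape
    as the traces plotted in this tutorial.
    """
    if not samples:
        raise ValueError("need at least one (time_s, used_mb) sample")
    _, used = zip(*samples)
    floor, peak = min(used), max(used)
    return {
        "floor_mb": floor,         # lowest reading seen in the trace
        "peak_mb": peak,           # highest reading seen in the trace
        "flex_mb": peak - floor,   # same quantity as max(mk) - min(mk) above
        "median_mb": median(used),
    }


# Synthetic numbers for illustration only; these are not measurements.
demo = [(0.0, 1000.0), (1.0, 1800.0), (2.0, 1100.0)]
stats = summarize_trace(demo)
print(f"flex: {stats['flex_mb']:.0f} MB, peak: {stats['peak_mb']:.0f} MB")
```

With real data you would call `summarize_trace(mem_kvc)` or `summarize_trace(sampler.samples)`. A static pool should report a flex near zero, whereas an elastic one should show a clear gap between floor and peak.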